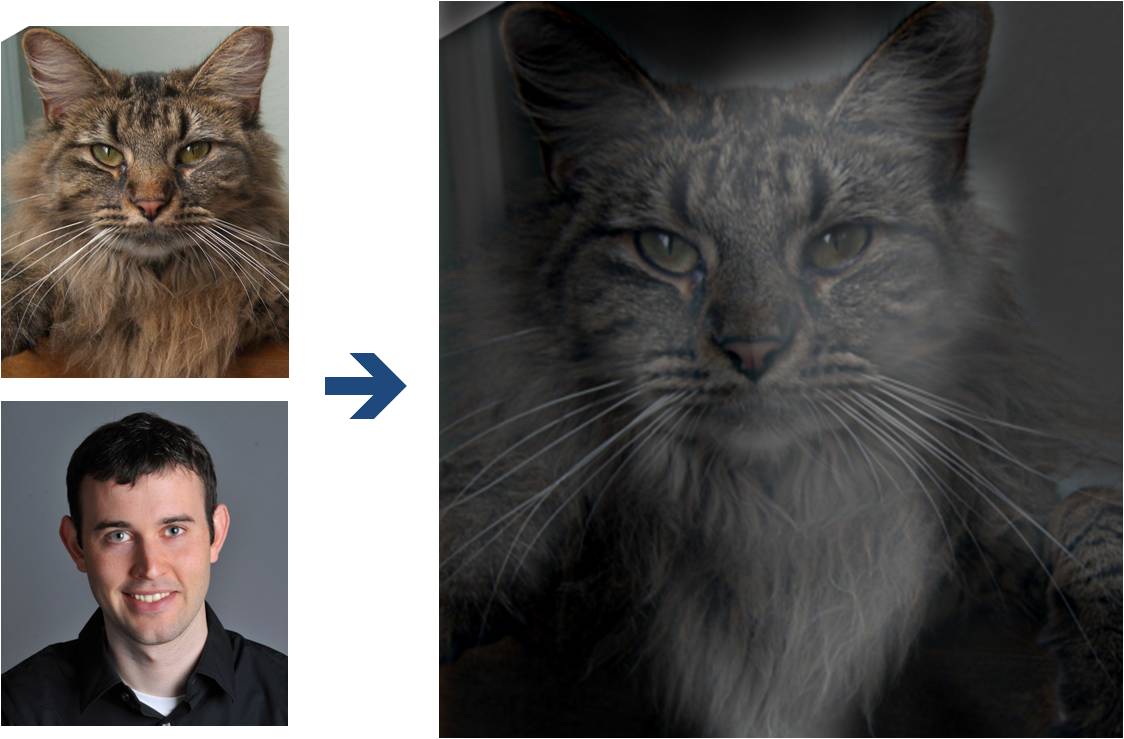

This part of the project is intended to familiarize you with image filtering and frequency representations. The goal is to create hybrid images using the approach described in the SIGGRAPH 2006 paper by Oliva, Torralba, and Schyns. Hybrid images are static images that change in interpretation as a function of the viewing distance. The basic idea is that high-frequency content tends to dominate perception when it is available, but, at a distance, only the low-frequency (smooth) part of the signal can be seen. By blending the high-frequency portion of one image with the low-frequency portion of another, you get a hybrid image that leads to different interpretations at different distances.

Here, I have included two sample images (of me and my former cat Nutmeg) and some starter code that can be used to load two images and align them. The alignment is important because it affects the perceptual grouping (read the paper for details).

First, you'll need to get a few pairs of images that you want to make into hybrid images. You can use the sample images for debugging, but you should use your own images in your results. Then, you will need to write code to low-pass filter one image, high-pass filter the second image, and add (or average) the two filtered images. For a low-pass filter, Oliva et al. suggest using a standard 2D Gaussian filter. For a high-pass filter, they suggest using the impulse filter minus the Gaussian filter (which can be computed by subtracting the Gaussian-filtered image from the original). The cutoff frequency of each filter should be chosen with some experimentation.
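The filtering steps above can be sketched roughly as follows. This is a minimal illustration, not the starter code: the synthetic images, the helper names (normalize, gauss1d, blur), and the two sigma values are all assumptions, and it uses only base functions (conv2) so it does not depend on any toolbox.

```matlab
% Synthetic stand-ins for two aligned grayscale images in [0, 1];
% replace them with your own image pair.
[x, y] = meshgrid(linspace(0, 1, 128));
im_low  = x;                               % supplies the low frequencies
im_high = double(mod(floor(16 * y), 2));   % supplies the high frequencies

% Normalized 1D Gaussian kernel, truncated at 3 standard deviations.
normalize = @(k) k / sum(k);
gauss1d = @(sigma) normalize(exp(-(-ceil(3 * sigma):ceil(3 * sigma)) .^ 2 / (2 * sigma ^ 2)));

% Separable 2D Gaussian blur: filter the columns, then the rows.
% 'same' zero-pads, so pixels near the border come out darker.
blur = @(im, sigma) conv2(gauss1d(sigma)', gauss1d(sigma), im, 'same');

sigma_low  = 6;   % assumed cutoffs in pixels; tune by experiment
sigma_high = 3;

low_part  = blur(im_low, sigma_low);               % Gaussian low-pass
high_part = im_high - blur(im_high, sigma_high);   % impulse minus Gaussian

% Add the two components and clamp to the displayable range.
hybrid = min(max(low_part + high_part, 0), 1);
```

A larger sigma_low removes more detail from the low-frequency image, while a smaller sigma_high keeps only finer edges of the other one; for color images the same blur can be applied to each channel separately.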

For your favorite result, you should also illustrate the process through frequency analysis. Show the log magnitude of the Fourier transform of the two input images, the filtered images, and the hybrid image. In Matlab, you can compute and display the log magnitude of the 2D Fourier transform with: imagesc(log(abs(fftshift(fft2(gray_image)))))
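To show several spectra side by side, the one-liner can be wrapped in a small helper. The sketch below is only one possible layout: the names images, titles, and log_mag are hypothetical, the random images are placeholders, and the added eps term is my own guard against log(0) rather than part of the original expression.

```matlab
% Synthetic stand-ins; in practice use the two inputs, the two filtered
% images, and the hybrid, all converted to grayscale.
images = {rand(64), rand(64)};
titles = {'input 1', 'input 2'};

% Log magnitude of the centered 2D Fourier transform.
% Adding eps avoids log(0) for empty frequency bins.
log_mag = @(im) log(abs(fftshift(fft2(im))) + eps);

for i = 1:numel(images)
    subplot(1, numel(images), i);
    imagesc(log_mag(images{i}));
    axis image;
    axis off;
    colormap gray;
    title(titles{i});
end
```

After fftshift the zero-frequency (DC) term sits at the center of the plot, so a low-pass filtered image should show energy concentrated there and a high-pass filtered one should show a dark center.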

Try creating a variety of types of hybrid images (change of expression, morph between different objects, change over time, etc.). The site has several examples that may inspire you.

You may sometimes find that your photographs do not quite have the vivid colors or contrast that you remember seeing. In this part of the project, we'll look at three simple types of enhancement. You can do two out of three of these. The third is worth 10 points as a bells & whistles item.

Contrast Enhancement: The goal is to improve the contrast of the images. The poor contrast could be due to blurring in the capture process or due to the intensities not covering the full range. Choose an image (ideally one of yours, but one from the web is OK) that has poor contrast and fix the problem. Potential fixes include Laplacian filtering, gamma correction, and histogram equalization. Explain why you chose your solution.
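Two of the fixes named above, gamma correction and histogram equalization, can be sketched with base functions only. This is an illustration under stated assumptions: the synthetic image, the exponent g, and the 256-bin choice are mine, and the right fix for a real photograph depends on why its contrast is poor.

```matlab
% Synthetic low-contrast grayscale image with values in [0.4, 0.6];
% replace it with your own image scaled to [0, 1].
im = 0.4 + 0.2 * rand(64);

% Option 1: gamma correction. An exponent below 1 brightens midtones,
% an exponent above 1 darkens them.
g = 0.7;                                       % assumed value, tune per image
im_gamma = im .^ g;

% Option 2: histogram equalization over 256 bins.
nbins = 256;
idx = min(floor(im * nbins) + 1, nbins);       % bin index in 1..nbins per pixel
counts = accumarray(idx(:), 1, [nbins 1]);     % histogram of bin counts
cdf = cumsum(counts) / numel(im);              % cumulative distribution in (0, 1]
im_eq = reshape(cdf(idx), size(im));           % map each pixel through the CDF
```

Gamma correction only bends the tone curve, so it cannot stretch a narrow intensity range very far; equalization spreads the occupied bins across the full range, which helps when the intensities do not cover [0, 1] but can exaggerate noise.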

Color Enhancement: Now, how to make the colors brighter? You'll find that if you just add some constant to all of the pixel values or multiply them by some factor, you'll make the images lighter, but the colors won't be more vivid. The trick is to work in the correct color space. Convert the images to HSV color space and divide into hue, saturation, and value channels ([h, s, v] = rgb2hsv(im) in Matlab). Then manipulate the appropriate channel(s) to make the colors (but not the intensity) brighter. Note that you want the values to map between 0 and 1, so you shouldn't just add or multiply by some constant. Show this with at least one photograph. Show the original and enhanced images and explain your method.
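One way to raise saturation while keeping it inside [0, 1] is a power curve on the saturation channel. The sketch below is just one possible mapping, not the required method: the synthetic image and the exponent p are assumptions, and other monotonic curves from [0, 1] to [0, 1] would work as well.

```matlab
% Synthetic RGB image with muted colors in [0.3, 0.7];
% replace it with your own image scaled to [0, 1].
im = 0.3 + 0.4 * rand(32, 32, 3);

hsv_orig = rgb2hsv(im);
hsv_new = hsv_orig;

% An exponent below 1 raises every saturation value yet keeps it in
% [0, 1], unlike adding a constant or scaling by a factor.
p = 0.6;                                   % assumed exponent, tune per image
hsv_new(:, :, 2) = hsv_orig(:, :, 2) .^ p;

% Hue and value are left untouched, so only the vividness changes.
im_vivid = hsv2rgb(hsv_new);
```

Because s .^ p with p below 1 maps 0 to 0 and 1 to 1, gray pixels stay gray and fully saturated pixels are not clipped.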

Color Shift: Take an image of your choice and create two color-modified versions that are (a) more red; (b) less yellow. Show the original and two modified images and explain how you did it and what color space you've used. Note that you should not change the luminance of the photograph (i.e., don't make it more red just by increasing the values of the red channel). I've included code in hybrid.zip for converting between RGB and LAB spaces, in case you want to use LAB space.
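In LAB space the lightness channel L is separate from the two color axes (a runs from green to red, b from blue to yellow), so shifting a or b changes the color without touching L. The sketch below assumes the Image Processing Toolbox functions rgb2lab and lab2rgb rather than the conversion code in hybrid.zip, whose function names are not given here; the synthetic image and the shift amount are also assumptions.

```matlab
% Synthetic RGB image in [0.3, 0.7]; replace it with your own image
% scaled to [0, 1].
im = 0.3 + 0.4 * rand(32, 32, 3);

lab = rgb2lab(im);     % requires the Image Processing Toolbox
shift = 15;            % assumed shift in LAB units, tune per image

% (a) More red: move the a channel toward its positive (red) end.
lab_red = lab;
lab_red(:, :, 2) = lab(:, :, 2) + shift;

% (b) Less yellow: move the b channel away from its positive (yellow) end.
lab_less_yellow = lab;
lab_less_yellow(:, :, 3) = lab(:, :, 3) - shift;

% Convert back; clamp because shifted colors can fall outside the RGB gamut.
im_red = min(max(lab2rgb(lab_red), 0), 1);
im_less_yellow = min(max(lab2rgb(lab_less_yellow), 0), 1);
```

The L channel is unchanged before conversion, but clamping out-of-gamut pixels can alter their lightness slightly, so a large shift is worth checking visually.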

To turn in your assignment, place your index.html file and any supporting media in your project directory. On Compass, you will also submit code, a thumbnail, and text outlining how many points you think you should get. See project instructions for details.

Use words and images to show us what you've done (the web page doesn't need to be fancy). Please:

The core assignment is worth 100 points, as follows:

You can also earn up to 30 extra points for the bells & whistles mentioned above (5 for experimenting with color; 15 for Gaussian/Laplacian pyramids; 10 for third task of color enhancement) or suggest your own extensions (check with prof first).